Adobe launched video generation capabilities for its Firefly AI platform ahead of its Adobe MAX event on Monday. Starting today, users can test out Firefly’s video generator for the first time on Adobe’s website, or try out its new AI-powered video feature, Generative Extend, in the Premiere Pro beta app.

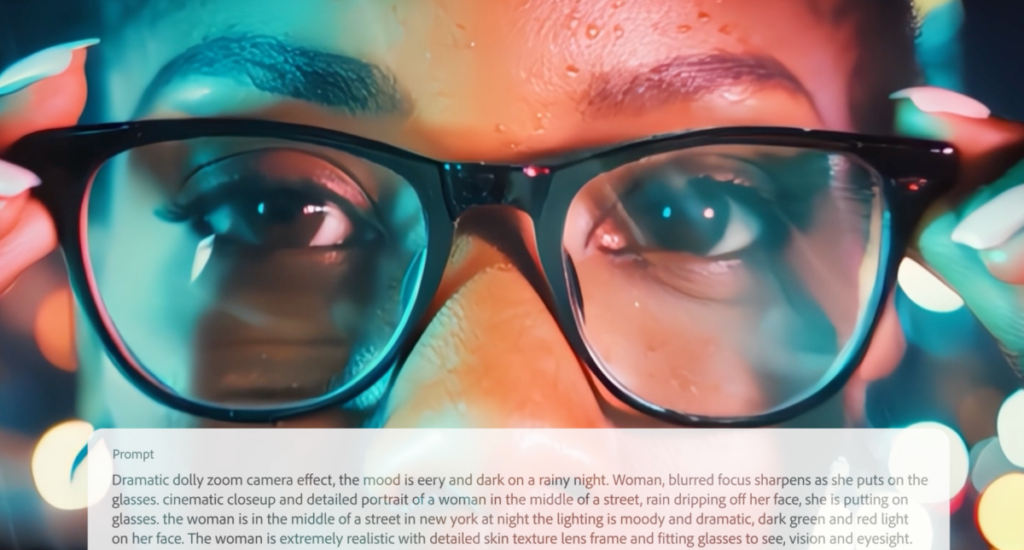

On the Firefly website, users can try out a text-to-video model or an image-to-video model, both producing up to five seconds of AI-generated video. (The web beta is free to use, but likely has rate limits.)

Adobe says it trained Firefly to create both animated content and photo-realistic media, depending on the specifications of a prompt. Firefly is also capable of producing videos with text, in theory at least, which is something AI image generators have historically struggled to produce. The Firefly video web app includes settings to toggle camera pans, the intensity of the camera’s movement, angle, and shot size.

In the Premiere Pro beta app, users can try out Firefly’s Generative Extend feature to extend video clips by up to two seconds. The feature is designed to generate an extra beat in a scene, continuing camera motion and the subject’s movements. The background audio will also be extended — the public’s first taste of the AI audio model Adobe has been quietly working on. The background audio extender will not recreate voices or music, however, to avoid copyright lawsuits from record labels.

In demos shared with TechCrunch ahead of the launch, Firefly’s Generative Extend feature produced more impressive videos than its text-to-video model and seemed more practical. The text-to-video and image-to-video models don’t quite have the same polish or wow factor as those from Adobe’s competitors in AI video, such as Runway’s Gen-3 Alpha or OpenAI’s Sora (though admittedly, the latter has yet to ship). Adobe says it put more focus on AI editing features than on generating AI videos, likely to please its user base.

Adobe’s AI features have to strike a delicate balance with its creative audience. On one hand, the company is trying to lead in a crowded space of AI startups and tech companies demoing impressive AI models. On the other hand, lots of creatives aren’t happy that AI features may soon replace the work they’ve done with their mouse, keyboard, and stylus for decades. That’s why Adobe’s first Firefly video feature, Generative Extend, uses AI to solve an existing problem for video editors (your clip isn’t long enough) instead of generating new video from scratch.

“Our audience is the most pixel perfect audience on Earth,” said Adobe’s VP of generative AI, Alexandru Costin, in an interview with TechCrunch. “They want AI to help them extend the assets they have, create variations of them, or edit them, versus generating new assets. So for us, it’s very important to do generative editing first, and then generative creation.”

Production-grade AI models that make editing easier: that’s the recipe behind Adobe’s early success with Firefly’s image model in Photoshop. Adobe executives previously said Photoshop’s Generative Fill feature is one of the most used new features of the last decade, largely because it complements and speeds up existing workflows. The company hopes it can replicate that success with video.

Adobe is trying to be mindful of creatives, reportedly paying photographers and artists $3 for every minute of video they submit to train its Firefly AI model. That said, many creatives are still wary of using AI tools or fear that the tools will make them obsolete. (Adobe also announced AI tools on Monday that let advertisers automatically generate content.)

Costin tells these concerned creatives that generative AI tools will create more demand for their work, not less: “If you think about the needs of companies wanting to create individualized and hyper personalized content for any user interacting with them, it’s infinite demand.”

Adobe’s AI lead says people should consider how other technological revolutions have benefited creatives, comparing the onset of AI tools to digital publishing and digital photography. He notes how these breakthroughs were originally seen as a threat, and says if creatives reject AI, they’re going to have a difficult time.

“Take advantage of generative capabilities to uplevel, upskill, and become a creative professional that can create 100 times more content using these tools,” said Costin. “The need of content is there, now you can do it without sacrificing your life. Embrace the tech. This is the new digital literacy.”

Firefly will also automatically insert “AI-generated” watermarks in the metadata of videos created this way. Meta uses identification tools on Instagram and Facebook to label media carrying such metadata as AI-generated. The idea is that, as long as content contains the appropriate metadata watermarks, platforms or individuals can use identification tools like these to determine what is and isn’t authentic. However, by default Adobe’s videos will not carry visible labels that make it easy for humans to tell they are AI-generated.

Adobe specifically designed Firefly to generate “commercially safe” media. The company says it did not train Firefly on images and videos depicting drugs, nudity, violence, or political figures, or on copyrighted materials. In theory, this should mean that Firefly’s video generator will not create “unsafe” videos. Now that the internet has free access to Firefly’s video model, we’ll see if that’s true.