Adobe says it’s building an AI model to generate video. But it’s not revealing when this model will launch, exactly — or much about it besides the fact that it exists.

Offered as an answer of sorts to OpenAI’s Sora, Google’s Imagen 2 and models from the growing number of startups in the nascent generative AI video space, Adobe’s model — a part of the company’s expanding Firefly family of generative AI products — will make its way into Premiere Pro, Adobe’s flagship video editing suite, sometime later this year, Adobe says.

Like many generative AI video tools today, Adobe’s model creates footage from scratch, working from either a prompt or reference images, and it powers three new features in Premiere Pro: object addition, object removal and generative extend.

They’re pretty self-explanatory.

Object addition lets users select a segment of a video clip (the upper third, say, or the lower-left corner) and enter a prompt to insert objects within that segment. In a briefing with TechCrunch, an Adobe spokesperson showed a still of a real-world briefcase filled with diamonds that Adobe’s model had generated.

Image Credits: AI-generated diamonds, courtesy of Adobe.

Object removal removes objects from clips, like boom mics or coffee cups in the background of a shot.

Removing objects with AI. Notice the results aren’t quite perfect. Image Credits: Adobe

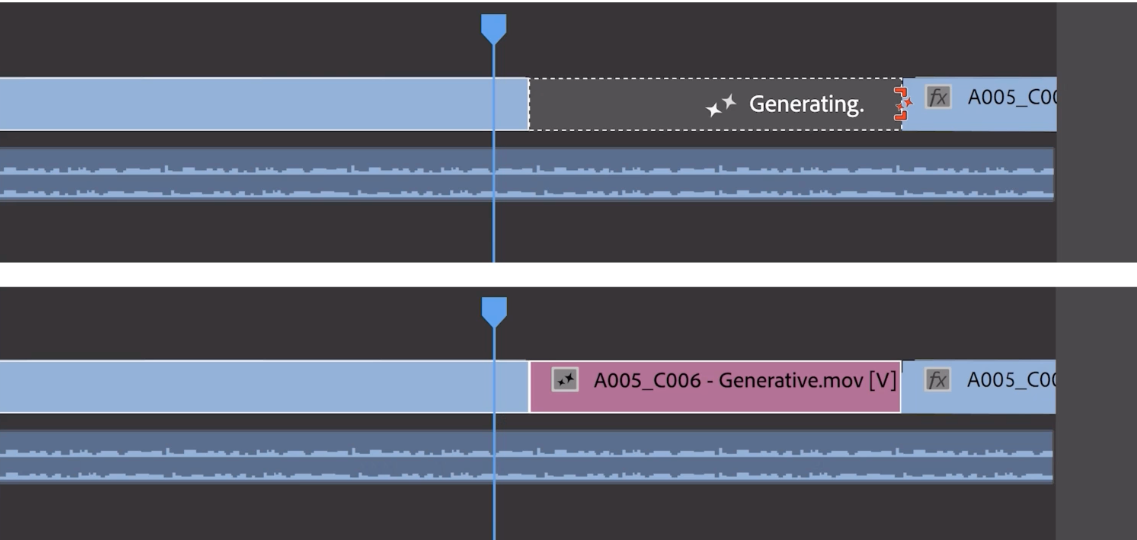

As for generative extend, it adds a few frames to the beginning or end of a clip (unfortunately, Adobe wouldn’t say how many frames). Generative extend isn’t meant to create whole scenes, but rather add buffer frames to sync up with a soundtrack or hold on to a shot for an extra beat — for instance to add emotional heft.

Image Credits: Adobe

To address the fear of deepfakes that inevitably crops up around generative AI tools such as these, Adobe says it’s bringing Content Credentials (metadata to identify AI-generated media) to Premiere. Content Credentials, a media provenance standard that Adobe backs through its Content Authenticity Initiative, were already available in Photoshop and built into Adobe’s image-generating Firefly models. In Premiere, they’ll indicate not only which content was AI-generated but which AI model was used to generate it.

I asked Adobe what data — images, videos and so on — were used to train the model. The company wouldn’t say, nor would it say how (or whether) it’s compensating contributors to the data set.

Last week, Bloomberg, citing sources familiar with the matter, reported that Adobe’s paying photographers and artists on its stock media platform, Adobe Stock, up to $120 for submitting short video clips to train its video generation model. The pay’s said to range from around $2.62 per minute of video to around $7.25 per minute depending on the submission, with higher-quality footage commanding correspondingly higher rates.

That’d be a departure from Adobe’s current arrangement with Adobe Stock artists and photographers whose work it’s using to train its image generation models. The company pays those contributors an annual bonus, not a one-time fee, depending on the volume of content they have in Stock and how it’s being used — albeit a bonus that’s subject to an opaque formula and not guaranteed from year to year.

Bloomberg’s reporting, if accurate, depicts an approach in stark contrast to that of generative AI video rivals like OpenAI, which is said to have scraped publicly available web data — including videos from YouTube — to train its models. YouTube’s CEO, Neal Mohan, recently said that use of YouTube videos to train OpenAI’s text-to-video generator would be an infraction of the platform’s terms of service, highlighting the legal tenuousness of OpenAI’s and others’ fair use argument.

Companies, including OpenAI, are being sued over allegations that they’re violating IP law by training their AI on copyrighted content without providing the owners credit or pay. Adobe seems intent on avoiding that outcome, as do its sometime generative AI competitors Shutterstock and Getty Images (which also have arrangements to license model training data). With its IP indemnity policy, Adobe is also positioning itself as a verifiably “safe” option for enterprise customers.

On the subject of payment, Adobe isn’t saying how much it’ll cost customers to use the upcoming video generation features in Premiere; presumably, pricing’s still being hashed out. But the company did reveal that the payment scheme will follow the generative credits system established with its early Firefly models.

For customers with a paid subscription to Adobe Creative Cloud, generative credits renew at the beginning of each month, with allotments ranging from 25 to 1,000 per month depending on the plan. More complex workloads (e.g. higher-resolution generated images or multiple-image generations) require more credits, as a general rule.

The big question in my mind is, will Adobe’s AI-powered video features be worth whatever they end up costing?

The Firefly image generation models so far have been widely derided as underwhelming and flawed compared to Midjourney, OpenAI’s DALL-E 3 and other competing tools. The lack of a release time frame for the video model doesn’t instill a lot of confidence that it’ll avoid the same fate. Neither does the fact that Adobe declined to show me live demos of object addition, object removal and generative extend, insisting instead on a prerecorded sizzle reel.

Perhaps to hedge its bets, Adobe says that it’s in talks with third-party vendors about integrating their video generation models into Premiere, as well, to power tools like generative extend and more.

One of those vendors is OpenAI.

Adobe says it’s collaborating with OpenAI on ways to bring Sora into the Premiere workflow. (An OpenAI tie-up makes sense given the AI startup’s overtures to Hollywood recently; tellingly, OpenAI CTO Mira Murati will be attending the Cannes Film Festival this year.) Other early partners include Pika, a startup building AI tools to generate and edit videos, and Runway, which was one of the first vendors to market with a generative video model.

An Adobe spokesperson said the company would be open to working with others in the future.

Now, to be crystal clear, these integrations are more of a thought experiment than a working product at present. Adobe stressed to me repeatedly that they’re in “early preview” and “research” rather than a thing customers can expect to play with anytime soon.

And that, I’d say, captures the overall tone of Adobe’s generative video presser.

Adobe’s clearly trying to signal with these announcements that it’s thinking about generative video, if only in the preliminary sense. It’d be foolish not to: to be caught flat-footed in the generative AI race is to risk losing out on a valuable potential new revenue stream, assuming the economics eventually work out in Adobe’s favor. (AI models are costly to train, run and serve, after all.)

But what it’s showing (concepts) isn’t super compelling, frankly. With Sora in the wild and surely more innovations coming down the pipeline, the company has much to prove.